This software quickly extracts page content (as text, HTML, or Markdown) and the contents of specific divs and spans from websites, and saves the results in CSV or JSON format.

One of the great features of WebScraper is the flexibility it offers in terms of the data it extracts. In addition to basic metadata, this tool can also retrieve the entire content of each page (as text, HTML, or Markdown) and selectively extract specific parts of pages by the class, ID, or itemprop attribute of div, span, dd, or p elements.
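To make the idea of selective extraction concrete, here is a minimal sketch in Python using only the standard library. It is not how WebScraper itself works (WebScraper is a point-and-click application); the names `SelectiveExtractor`, `extract`, and `SAMPLE` are hypothetical and chosen purely for illustration. The sketch assumes well-formed HTML and collects the text of any div, span, dd, or p whose class, id, or itemprop matches a given value.

```python
from html.parser import HTMLParser

# Element types considered for selective extraction (as listed in the review).
TARGET_TAGS = {"div", "span", "dd", "p"}


class SelectiveExtractor(HTMLParser):
    """Collects the text of elements matching one attribute/value pair."""

    def __init__(self, attr, value):
        super().__init__()
        self.attr = attr          # "class", "id", or "itemprop"
        self.value = value
        self.results = []
        self._tag = None          # tag name of the element being captured
        self._depth = 0           # nesting depth of same-named tags
        self._buffer = []

    def _matches(self, attrs):
        found = dict(attrs).get(self.attr)
        if found is None:
            return False
        if self.attr == "class":
            # A class attribute may hold several space-separated names.
            return self.value in found.split()
        return found == self.value

    def handle_starttag(self, tag, attrs):
        if self._tag is None:
            if tag in TARGET_TAGS and self._matches(attrs):
                self._tag, self._depth, self._buffer = tag, 1, []
        elif tag == self._tag:
            self._depth += 1

    def handle_endtag(self, tag):
        if tag == self._tag:
            self._depth -= 1
            if self._depth == 0:
                # Collapse runs of whitespace before storing the text.
                self.results.append(" ".join("".join(self._buffer).split()))
                self._tag = None

    def handle_data(self, data):
        if self._tag is not None:
            self._buffer.append(data)


def extract(html, attr, value):
    parser = SelectiveExtractor(attr, value)
    parser.feed(html)
    parser.close()
    return parser.results


# Hypothetical sample page fragment.
SAMPLE = """
<div id="main">
  <span class="price sale">19.99</span>
  <dd itemprop="brand">Acme</dd>
  <p class="note">In <span>stock</span> now</p>
</div>
"""

if __name__ == "__main__":
    print(extract(SAMPLE, "class", "price"))
    print(extract(SAMPLE, "itemprop", "brand"))
    print(extract(SAMPLE, "class", "note"))
```

Each call returns a list, one entry per matching element, which maps naturally onto one column of a CSV export.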

Another big advantage of WebScraper is its ease of use. Getting started is as simple as entering a starting URL and hitting the "Go" button. And once you're up and running, the software offers a range of export options, with checkboxes that make it easy to select the columns you want.

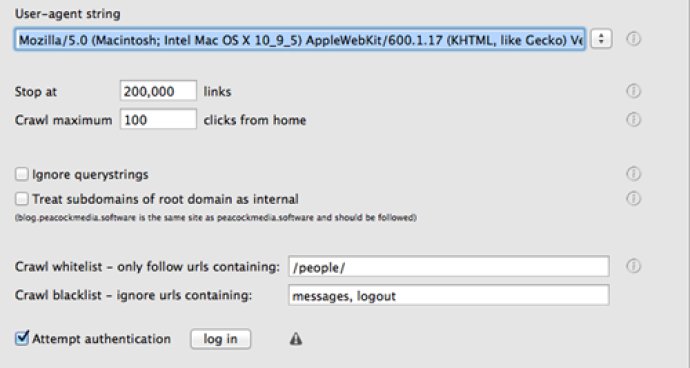

In addition to its user-friendly interface, WebScraper also offers plenty of advanced options for power users. These include the ability to configure various limits on the crawl and on output file size, so that you get the results you need without bogging down your system.

Overall, we found WebScraper to be a powerful and versatile tool that delivers excellent results. And with its free trial offer and responsive customer support team, we would highly recommend this tool to anyone in need of a dependable web scraping solution.

Version 4.15.3:

Adds the setting 'Legacy webview'. By default, the up-to-date WebKit webview is now used for rendering; the legacy version may work better in some cases.

The setting 'Attempt authentication' has been relabeled 'handle cookies'